yesterday our API (the API of qr.cx) returned rubbish for about 12 hours. i apologize for that; this will not happen again. we are working on a reimplementation, which should be far more reliable.

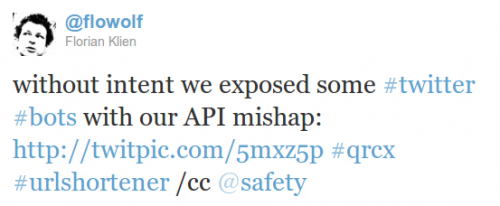

however, the outage had an upside: we were able to expose twitter bots that published this rubbish without checking it. in total we found 336 twitter bots that did so. they included

`<br /><b>Notice</b>: Undefined variable: [...] in <b>/[...]/qr.cx/htdocs/api/index.php</b>[...]`

in their tweets. a human being would not do that. firstly, the API is made for automated use, so a human would hardly call it by hand on a regular basis; secondly, the error is obvious to a human user, who would not publish a tweet containing the full nonsense. the bots did.

so now we can search twitter for this perfidious string and see which accounts are bots. this is good: it could help twitter™ identify malicious users and bots and protect its normal human users.
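a minimal sketch of that check, assuming the tweets have already been fetched somehow and sit in a plain list of dicts with `user` and `text` keys (that shape, the function name and the regex are all made up for illustration, not what we actually run):

```python
import re

# Assumption: only the fixed parts of the PHP notice are matched, since the
# variable name and the path differ (they are elided in the post above).
ERROR_SIGNATURE = re.compile(r"<b>Notice</b>:\s*Undefined variable", re.IGNORECASE)


def find_bot_accounts(tweets):
    """Return the set of user names whose tweets contain the error text.

    `tweets` is a hypothetical list of {"user": ..., "text": ...} dicts.
    """
    return {t["user"] for t in tweets if ERROR_SIGNATURE.search(t["text"])}


if __name__ == "__main__":
    sample = [
        {"user": "bot_a", "text": "<br /><b>Notice</b>: Undefined variable: x in <b>/srv/index.php</b>"},
        {"user": "human_b", "text": "just had a great coffee"},
    ]
    print(find_bot_accounts(sample))
```

matching only the stable part of the notice keeps the check independent of whatever variable name or path ended up in a particular tweet.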

but it also helps us, the URL shortener, to safeguard the system: we can identify spam links by searching a twitter bot’s stream for links it has shortened before. those most likely point to spam or fraudulent pages, so disabling them would do no harm.
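roughly, that could look like the sketch below. it assumes a list of already-identified bot account names and the same hypothetical list-of-dicts tweet shape (`user`, `text`); the short-link pattern is also an assumption about what qr.cx links look like, and the result is only a list of candidates for review, not an automatic blocklist:

```python
import re

# Assumption: short links look like http(s)://qr.cx/<alphanumeric code>.
SHORT_LINK = re.compile(r"https?://qr\.cx/[A-Za-z0-9]+")


def links_to_review(tweets, bot_accounts):
    """Collect qr.cx links found in tweets posted by known bot accounts.

    `tweets` is a hypothetical list of {"user": ..., "text": ...} dicts and
    `bot_accounts` a set of user names flagged earlier.
    """
    links = set()
    for tweet in tweets:
        if tweet["user"] in bot_accounts:
            links.update(SHORT_LINK.findall(tweet["text"]))
    return sorted(links)


if __name__ == "__main__":
    sample = [
        {"user": "bot_a", "text": "cheap pills http://qr.cx/a1"},
        {"user": "human_b", "text": "my blog http://qr.cx/zz"},
    ]
    print(links_to_review(sample, {"bot_a"}))
```

links from accounts that are not flagged are left alone, so a human who happens to use the shortener is not affected.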

i’m looking forward to implementing these security features. it will definitely require a little more thinking to set up a nice, safe system.